GPT Image 2 Is Live on Archly: Better Image Generation for Architectural Visualization

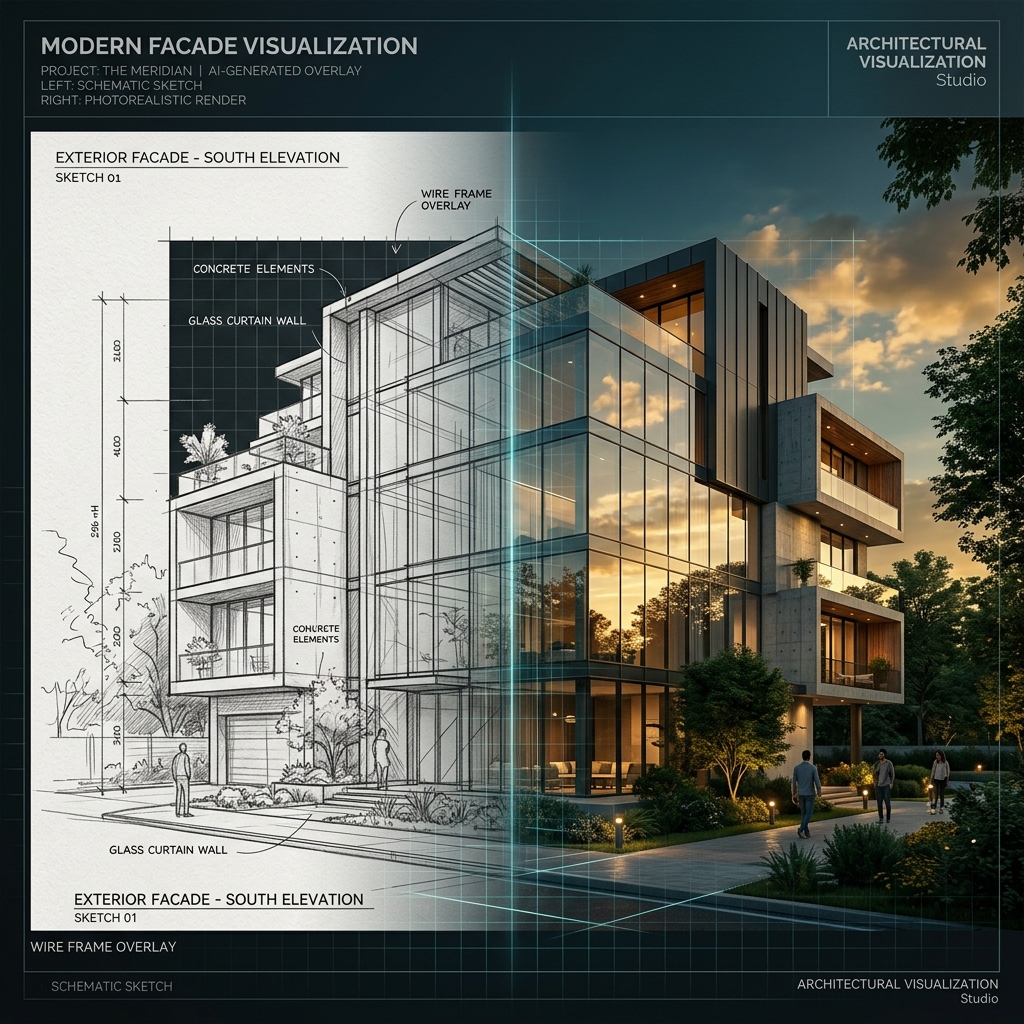

GPT Image 2 is now live on Archly. For architects, the important change is not another model name in a dropdown. It is a more capable image generation and editing layer inside a workflow built around sketches, references, materials, facades, interiors, and presentation images.

OpenAI describes GPT Image 2 as its state-of-the-art model for fast, high-quality image generation and editing, accepting text and image input and producing image output. Inside Archly, we are using that capability where it matters for architectural visualization: closer adherence to prompts, stronger reference handling, and cleaner iterative edits.

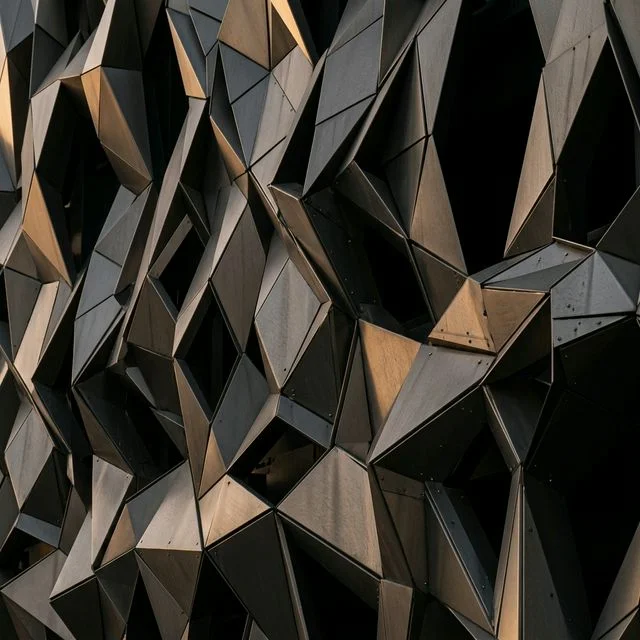

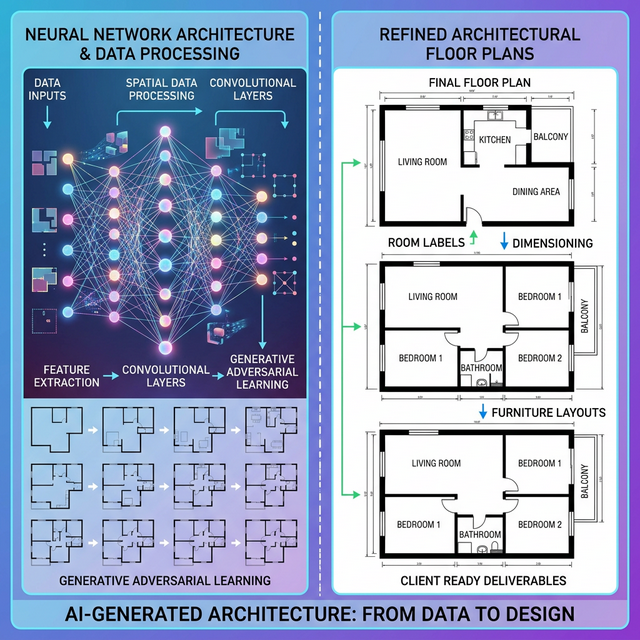

The practical use cases are straightforward. Upload a sketch or an existing render, then test a facade material, shift the atmosphere of an interior, explore daylight conditions, or generate a sharper concept image for a client conversation. Archly keeps the workflow architecture-specific, so the model is not treated as generic image entertainment.

This does not replace architectural judgment. Plans, spatial logic, regulations, detailing, and buildability still belong to the architect. The value of GPT Image 2 on Archly is speed around visual decision-making: more options, better edits, and less friction between an idea and a presentable render.

You can try the new model now at archly.ai. We will keep refining how Archly routes image models for architectural tasks, so architects spend less time fighting prompts and more time developing the design.